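Semi-supervised image classification using contrastive pretraining with SimCLR

Author: András Béres

Date created: 2021/04/24

Last modified: 2024/03/04

Description: Contrastive pretraining with SimCLR for semi-supervised image classification on the STL-10 dataset.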

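Introduction

Semi-supervised learning

Semi-supervised learning is a machine learning paradigm that deals with **partially labeled datasets**. When applying deep learning in the real world, one usually has to gather a large dataset to make it work well. However, while the cost of labeling scales linearly with the dataset size (labeling each example takes a constant amount of time), model performance only scales **sublinearly** with it. This means that labeling more and more samples becomes less and less cost-efficient, while gathering unlabeled data is generally cheap, as it is usually readily available in large quantities.

Semi-supervised learning addresses this problem by requiring only a partially labeled dataset, and by being label-efficient through also learning from the unlabeled examples.

In this example, we pretrain an encoder with contrastive learning on the STL-10 semi-supervised dataset using no labels at all, and then fine-tune it using only its labeled subset.

Contrastive learning

At the highest level, the main idea behind contrastive learning is to **learn representations that are invariant to image augmentations in a self-supervised manner**. One problem with this objective is that it has a trivial degenerate solution: the representations are constant and do not depend on the input images at all.

Contrastive learning avoids this trap by modifying the objective in the following way: it pulls the representations of augmented versions/views of the same image closer to each other (contracting positives), while simultaneously pushing different images away from each other (contrasting negatives) in representation space.

One such contrastive approach is SimCLR, which essentially identifies the core components needed to optimize this objective, and achieves high performance by scaling this simple approach.

Another approach is SimSiam (Keras example), whose main difference from SimCLR is that it does not use any negatives in its loss. Therefore, it does not explicitly prevent the degenerate solution. Instead, it avoids it implicitly through architecture design (asymmetric encoding paths using a predictor network, and batch normalization (BatchNorm) applied in the final layers).

For further reading about SimCLR, see the official Google AI blog post, and for an overview of self-supervised learning across both vision and language, see this blog post.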

Setup

import os

os.environ["KERAS_BACKEND"] = "tensorflow"

# Make sure we are able to handle large datasets

import resource

low, high = resource.getrlimit(resource.RLIMIT_NOFILE)

resource.setrlimit(resource.RLIMIT_NOFILE, (high, high))

import math

import matplotlib.pyplot as plt

import tensorflow as tf

import tensorflow_datasets as tfds

import keras

from keras import ops

from keras import layers

Hyperparameter setup

# Dataset hyperparameters

unlabeled_dataset_size = 100000

labeled_dataset_size = 5000

image_channels = 3

# Algorithm hyperparameters

num_epochs = 20

batch_size = 525 # Corresponds to 200 steps per epoch

width = 128

temperature = 0.1

# Stronger augmentations for contrastive, weaker ones for supervised training

contrastive_augmentation = {"min_area": 0.25, "brightness": 0.6, "jitter": 0.2}

classification_augmentation = {
    "min_area": 0.75,
    "brightness": 0.3,
    "jitter": 0.1,
}

Dataset

During training, we simultaneously load a large batch of unlabeled images along with a small batch of labeled images.

def prepare_dataset():
    # Labeled and unlabeled samples are loaded synchronously
    # with batch sizes selected accordingly
    steps_per_epoch = (unlabeled_dataset_size + labeled_dataset_size) // batch_size
    unlabeled_batch_size = unlabeled_dataset_size // steps_per_epoch
    labeled_batch_size = labeled_dataset_size // steps_per_epoch
    print(
        f"batch size is {unlabeled_batch_size} (unlabeled) + {labeled_batch_size} (labeled)"
    )

    # Turning off shuffle to lower resource usage
    unlabeled_train_dataset = (
        tfds.load("stl10", split="unlabelled", as_supervised=True, shuffle_files=False)
        .shuffle(buffer_size=10 * unlabeled_batch_size)
        .batch(unlabeled_batch_size)
    )
    labeled_train_dataset = (
        tfds.load("stl10", split="train", as_supervised=True, shuffle_files=False)
        .shuffle(buffer_size=10 * labeled_batch_size)
        .batch(labeled_batch_size)
    )
    test_dataset = (
        tfds.load("stl10", split="test", as_supervised=True)
        .batch(batch_size)
        .prefetch(buffer_size=tf.data.AUTOTUNE)
    )

    # Labeled and unlabeled datasets are zipped together
    train_dataset = tf.data.Dataset.zip(
        (unlabeled_train_dataset, labeled_train_dataset)
    ).prefetch(buffer_size=tf.data.AUTOTUNE)

    return train_dataset, labeled_train_dataset, test_dataset

# Load STL10 dataset

train_dataset, labeled_train_dataset, test_dataset = prepare_dataset()

batch size is 500 (unlabeled) + 25 (labeled)
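The batch sizes printed above follow from integer arithmetic on the dataset sizes. A minimal sketch that reuses the values from the hyperparameter section and the variable names from `prepare_dataset`:

```python
# Values copied from the hyperparameter section of this example.
unlabeled_dataset_size = 100000
labeled_dataset_size = 5000
batch_size = 525

# One epoch walks through both datasets in lockstep, so the number of steps
# is the total number of images divided by the combined batch size.
steps_per_epoch = (unlabeled_dataset_size + labeled_dataset_size) // batch_size
unlabeled_batch_size = unlabeled_dataset_size // steps_per_epoch
labeled_batch_size = labeled_dataset_size // steps_per_epoch

print(steps_per_epoch, unlabeled_batch_size, labeled_batch_size)  # 200 500 25
```

Because both datasets are consumed in the same number of steps, each training step sees 500 unlabeled and 25 labeled images.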

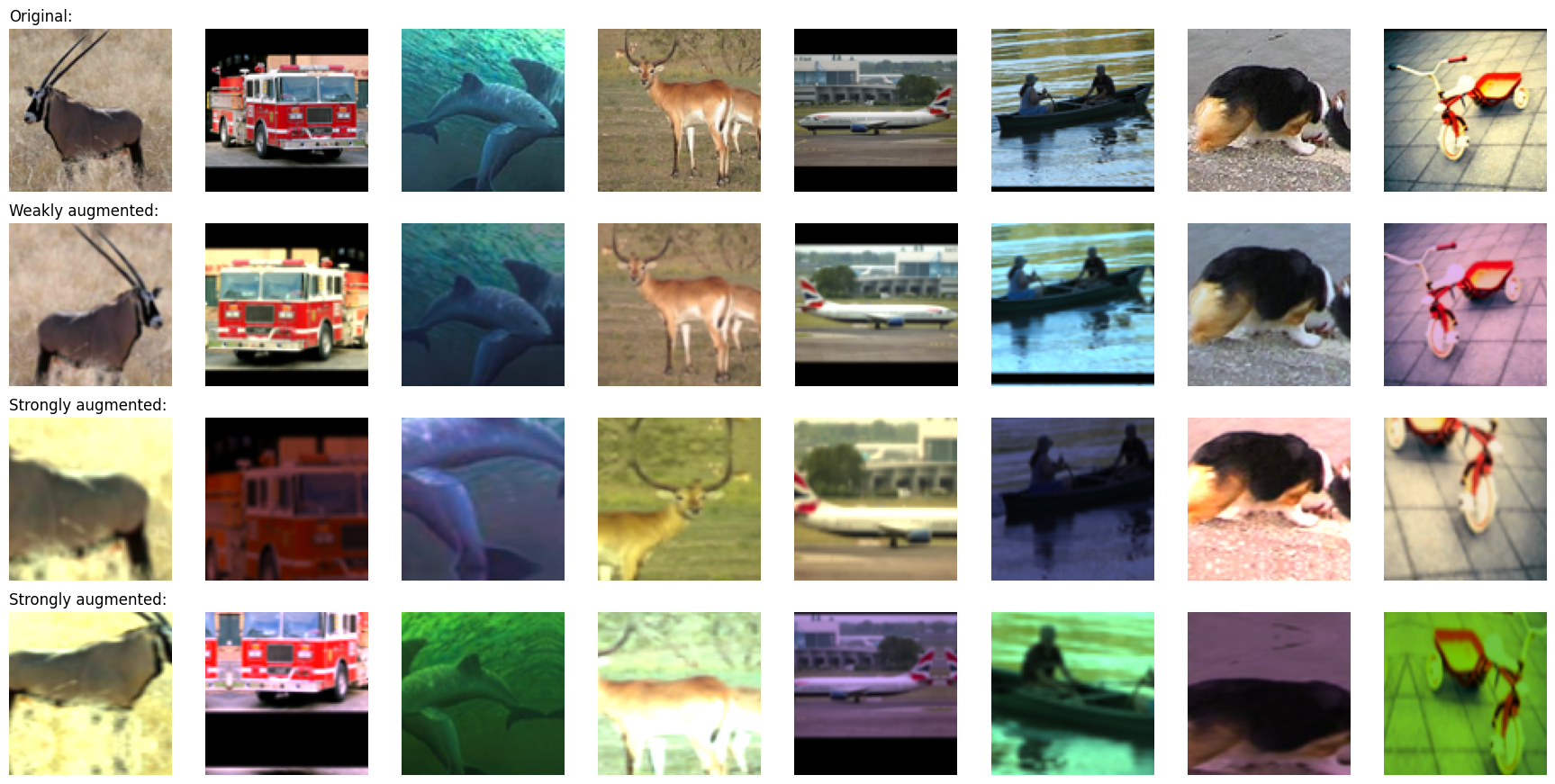

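Image augmentations

The two most important image augmentations for contrastive learning are the following:

- Cropping: forces the model to encode different parts of the same image similarly. We implement it with the RandomTranslation and RandomZoom layers.

- Color jitter: prevents a trivial color histogram-based solution to the task by distorting color histograms. A principled way to implement this is with affine transformations in color space.

In this example we use random horizontal flips as well. Stronger augmentations are applied for contrastive learning, and weaker ones for supervised classification, to avoid overfitting on the few labeled examples.

We implement random color jitter as a custom preprocessing layer. Using preprocessing layers for data augmentation has the following two advantages:

- The data augmentation runs on the GPU in batches, so training is not bottlenecked by the data pipeline in environments with constrained CPU resources (such as a Colab Notebook or a personal machine).

- Deployment is easier, as the data preprocessing pipeline is encapsulated in the model and does not have to be reimplemented when deploying it.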

# Distorts the color distributions of images
class RandomColorAffine(layers.Layer):
    def __init__(self, brightness=0, jitter=0, **kwargs):
        super().__init__(**kwargs)

        self.seed_generator = keras.random.SeedGenerator(1337)
        self.brightness = brightness
        self.jitter = jitter

    def get_config(self):
        config = super().get_config()
        config.update({"brightness": self.brightness, "jitter": self.jitter})
        return config

    def call(self, images, training=True):
        if training:
            batch_size = ops.shape(images)[0]

            # Same for all colors
            brightness_scales = 1 + keras.random.uniform(
                (batch_size, 1, 1, 1),
                minval=-self.brightness,
                maxval=self.brightness,
                seed=self.seed_generator,
            )
            # Different for all colors
            jitter_matrices = keras.random.uniform(
                (batch_size, 1, 3, 3),
                minval=-self.jitter,
                maxval=self.jitter,
                seed=self.seed_generator,
            )

            color_transforms = (
                ops.tile(ops.expand_dims(ops.eye(3), axis=0), (batch_size, 1, 1, 1))
                * brightness_scales
                + jitter_matrices
            )
            images = ops.clip(ops.matmul(images, color_transforms), 0, 1)
        return images

# Image augmentation module
def get_augmenter(min_area, brightness, jitter):
    zoom_factor = 1.0 - math.sqrt(min_area)
    return keras.Sequential(
        [
            layers.Rescaling(1 / 255),
            layers.RandomFlip("horizontal"),
            layers.RandomTranslation(zoom_factor / 2, zoom_factor / 2),
            layers.RandomZoom((-zoom_factor, 0.0), (-zoom_factor, 0.0)),
            RandomColorAffine(brightness, jitter),
        ]
    )

def visualize_augmentations(num_images):
    # Sample a batch from a dataset
    images = next(iter(train_dataset))[0][0][:num_images]

    # Apply augmentations
    augmented_images = zip(
        images,
        get_augmenter(**classification_augmentation)(images),
        get_augmenter(**contrastive_augmentation)(images),
        get_augmenter(**contrastive_augmentation)(images),
    )
    row_titles = [
        "Original:",
        "Weakly augmented:",
        "Strongly augmented:",
        "Strongly augmented:",
    ]
    plt.figure(figsize=(num_images * 2.2, 4 * 2.2), dpi=100)
    for column, image_row in enumerate(augmented_images):
        for row, image in enumerate(image_row):
            plt.subplot(4, num_images, row * num_images + column + 1)
            plt.imshow(image)
            if column == 0:
                plt.title(row_titles[row], loc="left")
            plt.axis("off")
    plt.tight_layout()


visualize_augmentations(num_images=8)
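The `zoom_factor` used in `get_augmenter` can be read as a conversion from a minimum crop area to a side-length reduction. The sketch below works through that arithmetic for the two augmentation settings. The helper name `crop_geometry` is hypothetical and introduced only for illustration, and the interpretation assumes a roughly square crop.

```python
import math


def crop_geometry(min_area):
    # A square crop covering `min_area` of the image has a side length of
    # sqrt(min_area), so each side shrinks by at most 1 - sqrt(min_area).
    zoom_factor = 1.0 - math.sqrt(min_area)
    # The translation range is half the zoom factor, as in get_augmenter.
    max_shift = zoom_factor / 2
    return zoom_factor, max_shift


for name, min_area in [("contrastive", 0.25), ("classification", 0.75)]:
    zoom_factor, max_shift = crop_geometry(min_area)
    print(f"{name}: zoom_factor={zoom_factor:.3f}, max_shift={max_shift:.3f}")
```

With `min_area=0.25` the image can be zoomed in by up to 50% per side, while `min_area=0.75` allows only about 13%, which is why the classification augmentations look much milder.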

Encoder architecture

# Define the encoder architecture
def get_encoder():
    return keras.Sequential(
        [
            layers.Conv2D(width, kernel_size=3, strides=2, activation="relu"),
            layers.Conv2D(width, kernel_size=3, strides=2, activation="relu"),
            layers.Conv2D(width, kernel_size=3, strides=2, activation="relu"),
            layers.Conv2D(width, kernel_size=3, strides=2, activation="relu"),
            layers.Flatten(),
            layers.Dense(width, activation="relu"),
        ],
        name="encoder",
    )
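To get a feel for the size of this encoder, the feature-map sizes and parameter count can be worked out by hand. The sketch below assumes 96x96 RGB inputs (the STL-10 image size) and the default "valid" padding of `Conv2D`. The helper `conv_output_size` is a hypothetical name used only for this illustration.

```python
def conv_output_size(size, kernel_size=3, stride=2):
    # Output length of a convolution with "valid" padding (the Keras default).
    return (size - kernel_size) // stride + 1


width = 128  # from the hyperparameter section
image_size = 96  # STL-10 images are 96x96 pixels
in_channels = 3

size, channels, total_params = image_size, in_channels, 0
for _ in range(4):
    # 3x3 kernel weights for every input/output channel pair, plus biases
    total_params += 3 * 3 * channels * width + width
    size, channels = conv_output_size(size), width

flattened = size * size * channels
total_params += flattened * width + width  # final Dense layer

print(size, flattened, total_params)  # 5 3200 856064
```

The spatial size shrinks as 96, 47, 23, 11, 5, so the final dense layer accounts for almost half of the parameters.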

Supervised baseline model

A baseline supervised model is trained using random initialization.

# Baseline supervised training with random initialization
baseline_model = keras.Sequential(
    [
        get_augmenter(**classification_augmentation),
        get_encoder(),
        layers.Dense(10),
    ],
    name="baseline_model",
)
baseline_model.compile(
    optimizer=keras.optimizers.Adam(),
    loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    metrics=[keras.metrics.SparseCategoricalAccuracy(name="acc")],
)

baseline_history = baseline_model.fit(
    labeled_train_dataset, epochs=num_epochs, validation_data=test_dataset
)

print(
    "Maximal validation accuracy: {:.2f}%".format(
        max(baseline_history.history["val_acc"]) * 100
    )
)

Epoch 1/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 9s 25ms/step - acc: 0.2031 - loss: 2.1576 - val_acc: 0.3234 - val_loss: 1.7719

Epoch 2/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.3476 - loss: 1.7792 - val_acc: 0.4042 - val_loss: 1.5626

Epoch 3/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.4060 - loss: 1.6054 - val_acc: 0.4319 - val_loss: 1.4832

Epoch 4/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 18ms/step - acc: 0.4347 - loss: 1.5052 - val_acc: 0.4570 - val_loss: 1.4428

Epoch 5/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 18ms/step - acc: 0.4600 - loss: 1.4546 - val_acc: 0.4765 - val_loss: 1.3977

Epoch 6/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.4754 - loss: 1.4015 - val_acc: 0.4740 - val_loss: 1.4082

Epoch 7/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.4901 - loss: 1.3589 - val_acc: 0.4761 - val_loss: 1.4061

Epoch 8/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5110 - loss: 1.2793 - val_acc: 0.5247 - val_loss: 1.3026

Epoch 9/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5298 - loss: 1.2765 - val_acc: 0.5138 - val_loss: 1.3286

Epoch 10/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5514 - loss: 1.2078 - val_acc: 0.5543 - val_loss: 1.2227

Epoch 11/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5520 - loss: 1.1851 - val_acc: 0.5446 - val_loss: 1.2709

Epoch 12/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5851 - loss: 1.1368 - val_acc: 0.5725 - val_loss: 1.1944

Epoch 13/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 18ms/step - acc: 0.5738 - loss: 1.1411 - val_acc: 0.5685 - val_loss: 1.1974

Epoch 14/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 21ms/step - acc: 0.6078 - loss: 1.0308 - val_acc: 0.5899 - val_loss: 1.1769

Epoch 15/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 18ms/step - acc: 0.6284 - loss: 1.0386 - val_acc: 0.5863 - val_loss: 1.1742

Epoch 16/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 18ms/step - acc: 0.6450 - loss: 0.9773 - val_acc: 0.5849 - val_loss: 1.1993

Epoch 17/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6547 - loss: 0.9555 - val_acc: 0.5683 - val_loss: 1.2424

Epoch 18/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6593 - loss: 0.9084 - val_acc: 0.5990 - val_loss: 1.1458

Epoch 19/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6672 - loss: 0.9267 - val_acc: 0.5685 - val_loss: 1.2758

Epoch 20/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6824 - loss: 0.8863 - val_acc: 0.5969 - val_loss: 1.2035

Maximal validation accuracy: 59.90%

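Self-supervised model for contrastive pretraining

We pretrain an encoder on unlabeled images with a contrastive loss. A nonlinear projection head is attached to the top of the encoder, as it improves the quality of the encoder's representations.

We use the InfoNCE/NT-Xent/N-pairs loss, which can be interpreted in the following way:

- We treat each image in the batch as if it had its own class.

- Then, we have two examples (a pair of augmented views) for each "class".

- Each view's representation is compared to that of every possible pair (for both augmented versions).

- We use the temperature-scaled cosine similarity of the compared representations as logits.

- Finally, we use categorical cross-entropy as the "classification" loss.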
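Written out, the steps above correspond to the loss below. The notation is introduced here for illustration only: $z^{(1)}_i$ and $z^{(2)}_i$ are the L2-normalized projections of the two views of image $i$, $\tau$ is the temperature and $N$ is the batch size. Following the code in this example, negatives are taken only from the other view. The NT-Xent loss in the SimCLR paper additionally uses the other images within the same view as negatives.

```latex
\ell_{1 \to 2} = -\frac{1}{N} \sum_{i=1}^{N}
  \log \frac{\exp\left(z^{(1)}_i \cdot z^{(2)}_i / \tau\right)}
            {\sum_{k=1}^{N} \exp\left(z^{(1)}_i \cdot z^{(2)}_k / \tau\right)},
\qquad
\mathcal{L} = \frac{1}{2}\left(\ell_{1 \to 2} + \ell_{2 \to 1}\right)
```

Here $\ell_{2 \to 1}$ is defined in the same way with the roles of the two views swapped, which gives the symmetrized loss.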

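The following two metrics are used for monitoring the pretraining performance:

- Contrastive accuracy (SimCLR Table 5): a self-supervised metric, the ratio of cases in which the representation of an image is more similar to that of its differently augmented version than to the representation of any other image in the current batch. Self-supervised metrics can be used for hyperparameter tuning even when there are no labeled examples.

- Linear probing accuracy: linear probing is a popular metric for evaluating self-supervised classifiers. It is computed as the accuracy of a logistic regression classifier trained on top of the encoder's features. In our case, this is done by training a single dense layer on top of the frozen encoder. Note that, contrary to the traditional approach where the classifier is trained after the pretraining phase, in this example we train it during pretraining. This might slightly decrease its accuracy, but it lets us monitor its value during training, which helps with experimentation and debugging.

Another widely used supervised metric is the KNN accuracy, which is the accuracy of a KNN classifier fitted on top of the encoder's features. It is not implemented in this example.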
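For readers who want to try the KNN metric, here is a minimal NumPy sketch. It is not part of the original example. The function name `knn_accuracy`, the choice of cosine similarity and `k=5` are assumptions, and the toy data below merely stands in for real encoder features.

```python
import numpy as np


def knn_accuracy(train_features, train_labels, test_features, test_labels, k=5):
    # L2-normalize so that the dot product equals cosine similarity.
    train = train_features / np.linalg.norm(train_features, axis=1, keepdims=True)
    test = test_features / np.linalg.norm(test_features, axis=1, keepdims=True)
    similarities = test @ train.T  # shape: (num_test, num_train)
    # Indices of the k most similar training samples for every test sample.
    nearest = np.argsort(-similarities, axis=1)[:, :k]
    neighbor_labels = train_labels[nearest]  # shape: (num_test, k)
    # Majority vote among the neighbors.
    predictions = np.array([np.bincount(row).argmax() for row in neighbor_labels])
    return float(np.mean(predictions == test_labels))


# Toy usage with synthetic, well-separated "features" (not real encoder outputs).
rng = np.random.default_rng(0)
centers = np.eye(3) * 5.0  # three classes along three axes
train_labels = np.repeat(np.arange(3), 20)
test_labels = np.repeat(np.arange(3), 5)
train_features = centers[train_labels] + rng.normal(scale=0.1, size=(60, 3))
test_features = centers[test_labels] + rng.normal(scale=0.1, size=(15, 3))
print(knn_accuracy(train_features, train_labels, test_features, test_labels))
```

In practice, the feature arrays would come from running the frozen encoder over the labeled training and test images.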

# Define the contrastive model with model-subclassing
class ContrastiveModel(keras.Model):
    def __init__(self):
        super().__init__()

        self.temperature = temperature
        self.contrastive_augmenter = get_augmenter(**contrastive_augmentation)
        self.classification_augmenter = get_augmenter(**classification_augmentation)
        self.encoder = get_encoder()
        # Non-linear MLP as projection head
        self.projection_head = keras.Sequential(
            [
                keras.Input(shape=(width,)),
                layers.Dense(width, activation="relu"),
                layers.Dense(width),
            ],
            name="projection_head",
        )
        # Single dense layer for linear probing
        self.linear_probe = keras.Sequential(
            [layers.Input(shape=(width,)), layers.Dense(10)],
            name="linear_probe",
        )

        self.encoder.summary()
        self.projection_head.summary()
        self.linear_probe.summary()

    def compile(self, contrastive_optimizer, probe_optimizer, **kwargs):
        super().compile(**kwargs)

        self.contrastive_optimizer = contrastive_optimizer
        self.probe_optimizer = probe_optimizer

        # self.contrastive_loss will be defined as a method
        self.probe_loss = keras.losses.SparseCategoricalCrossentropy(from_logits=True)

        self.contrastive_loss_tracker = keras.metrics.Mean(name="c_loss")
        self.contrastive_accuracy = keras.metrics.SparseCategoricalAccuracy(
            name="c_acc"
        )
        self.probe_loss_tracker = keras.metrics.Mean(name="p_loss")
        self.probe_accuracy = keras.metrics.SparseCategoricalAccuracy(name="p_acc")

    @property
    def metrics(self):
        return [
            self.contrastive_loss_tracker,
            self.contrastive_accuracy,
            self.probe_loss_tracker,
            self.probe_accuracy,
        ]

    def contrastive_loss(self, projections_1, projections_2):
        # InfoNCE loss (information noise-contrastive estimation)
        # NT-Xent loss (normalized temperature-scaled cross entropy)

        # Cosine similarity: the dot product of the l2-normalized feature vectors
        projections_1 = ops.normalize(projections_1, axis=1)
        projections_2 = ops.normalize(projections_2, axis=1)
        similarities = (
            ops.matmul(projections_1, ops.transpose(projections_2)) / self.temperature
        )

        # The similarity between the representations of two augmented views of the
        # same image should be higher than their similarity with other views
        batch_size = ops.shape(projections_1)[0]
        contrastive_labels = ops.arange(batch_size)
        self.contrastive_accuracy.update_state(contrastive_labels, similarities)
        self.contrastive_accuracy.update_state(
            contrastive_labels, ops.transpose(similarities)
        )

        # The temperature-scaled similarities are used as logits for cross-entropy;
        # a symmetrized version of the loss is used here
        loss_1_2 = keras.losses.sparse_categorical_crossentropy(
            contrastive_labels, similarities, from_logits=True
        )
        loss_2_1 = keras.losses.sparse_categorical_crossentropy(
            contrastive_labels, ops.transpose(similarities), from_logits=True
        )
        return (loss_1_2 + loss_2_1) / 2

    def train_step(self, data):
        (unlabeled_images, _), (labeled_images, labels) = data

        # Both labeled and unlabeled images are used, without labels
        images = ops.concatenate((unlabeled_images, labeled_images), axis=0)
        # Each image is augmented twice, differently
        augmented_images_1 = self.contrastive_augmenter(images, training=True)
        augmented_images_2 = self.contrastive_augmenter(images, training=True)
        with tf.GradientTape() as tape:
            features_1 = self.encoder(augmented_images_1, training=True)
            features_2 = self.encoder(augmented_images_2, training=True)
            # The representations are passed through a projection MLP
            projections_1 = self.projection_head(features_1, training=True)
            projections_2 = self.projection_head(features_2, training=True)
            contrastive_loss = self.contrastive_loss(projections_1, projections_2)
        gradients = tape.gradient(
            contrastive_loss,
            self.encoder.trainable_weights + self.projection_head.trainable_weights,
        )
        self.contrastive_optimizer.apply_gradients(
            zip(
                gradients,
                self.encoder.trainable_weights + self.projection_head.trainable_weights,
            )
        )
        self.contrastive_loss_tracker.update_state(contrastive_loss)

        # Labels are only used in evaluation for an on-the-fly logistic regression
        preprocessed_images = self.classification_augmenter(
            labeled_images, training=True
        )
        with tf.GradientTape() as tape:
            # The encoder is used in inference mode here to avoid regularization
            # and updating the batch normalization parameters if they are used
            features = self.encoder(preprocessed_images, training=False)
            class_logits = self.linear_probe(features, training=True)
            probe_loss = self.probe_loss(labels, class_logits)
        gradients = tape.gradient(probe_loss, self.linear_probe.trainable_weights)
        self.probe_optimizer.apply_gradients(
            zip(gradients, self.linear_probe.trainable_weights)
        )
        self.probe_loss_tracker.update_state(probe_loss)
        self.probe_accuracy.update_state(labels, class_logits)

        return {m.name: m.result() for m in self.metrics}

    def test_step(self, data):
        labeled_images, labels = data

        # For testing, the components are used with a training=False flag
        preprocessed_images = self.classification_augmenter(
            labeled_images, training=False
        )
        features = self.encoder(preprocessed_images, training=False)
        class_logits = self.linear_probe(features, training=False)
        probe_loss = self.probe_loss(labels, class_logits)
        self.probe_loss_tracker.update_state(probe_loss)
        self.probe_accuracy.update_state(labels, class_logits)

        # Only the probe metrics are logged at test time
        return {m.name: m.result() for m in self.metrics[2:]}

# Contrastive pretraining
pretraining_model = ContrastiveModel()
pretraining_model.compile(
    contrastive_optimizer=keras.optimizers.Adam(),
    probe_optimizer=keras.optimizers.Adam(),
)

pretraining_history = pretraining_model.fit(
    train_dataset, epochs=num_epochs, validation_data=test_dataset
)
print(
    "Maximal validation accuracy: {:.2f}%".format(
        max(pretraining_history.history["val_p_acc"]) * 100
    )
)

Model: "encoder"

┏━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━┳━━━━━━━━━━━━━┓
┃ Layer (type)        ┃ Output Shape ┃     Param # ┃
┡━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━╇━━━━━━━━━━━━━┩
│ conv2d_4 (Conv2D)   │ ?            │ 0 (unbuilt) │
│ conv2d_5 (Conv2D)   │ ?            │ 0 (unbuilt) │
│ conv2d_6 (Conv2D)   │ ?            │ 0 (unbuilt) │
│ conv2d_7 (Conv2D)   │ ?            │ 0 (unbuilt) │
│ flatten_1 (Flatten) │ ?            │ 0 (unbuilt) │
│ dense_2 (Dense)     │ ?            │ 0 (unbuilt) │
└─────────────────────┴──────────────┴─────────────┘

Total params: 0 (0.00 B)

Trainable params: 0 (0.00 B)

Non-trainable params: 0 (0.00 B)

Model: "projection_head"

┏━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━┳━━━━━━━━━━━━━┓
┃ Layer (type)        ┃ Output Shape ┃     Param # ┃
┡━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━╇━━━━━━━━━━━━━┩
│ dense_3 (Dense)     │ (None, 128)  │      16,512 │
│ dense_4 (Dense)     │ (None, 128)  │      16,512 │
└─────────────────────┴──────────────┴─────────────┘

Total params: 33,024 (129.00 KB)

Trainable params: 33,024 (129.00 KB)

Non-trainable params: 0 (0.00 B)

Model: "linear_probe"

┏━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━┳━━━━━━━━━━━━━┓
┃ Layer (type)        ┃ Output Shape ┃     Param # ┃
┡━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━╇━━━━━━━━━━━━━┩
│ dense_5 (Dense)     │ (None, 10)   │       1,290 │
└─────────────────────┴──────────────┴─────────────┘

Total params: 1,290 (5.04 KB)

Trainable params: 1,290 (5.04 KB)

Non-trainable params: 0 (0.00 B)

Epoch 1/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 34s 134ms/step - c_acc: 0.0880 - c_loss: 5.2606 - p_acc: 0.1326 - p_loss: 2.2726 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.2579 - val_p_loss: 2.0671

Epoch 2/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 139ms/step - c_acc: 0.2808 - c_loss: 3.6233 - p_acc: 0.2956 - p_loss: 2.0228 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.3440 - val_p_loss: 1.9242

Epoch 3/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 136ms/step - c_acc: 0.4097 - c_loss: 2.9369 - p_acc: 0.3671 - p_loss: 1.8674 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.3876 - val_p_loss: 1.7757

Epoch 4/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 30s 142ms/step - c_acc: 0.4893 - c_loss: 2.5707 - p_acc: 0.3957 - p_loss: 1.7490 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.3960 - val_p_loss: 1.7002

Epoch 5/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 136ms/step - c_acc: 0.5458 - c_loss: 2.3342 - p_acc: 0.4274 - p_loss: 1.6608 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.4374 - val_p_loss: 1.6145

Epoch 6/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 140ms/step - c_acc: 0.5949 - c_loss: 2.1179 - p_acc: 0.4410 - p_loss: 1.5812 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.4444 - val_p_loss: 1.5439

Epoch 7/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 135ms/step - c_acc: 0.6273 - c_loss: 1.9861 - p_acc: 0.4633 - p_loss: 1.5076 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.4695 - val_p_loss: 1.5056

Epoch 8/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 139ms/step - c_acc: 0.6566 - c_loss: 1.8668 - p_acc: 0.4817 - p_loss: 1.4601 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.4790 - val_p_loss: 1.4566

Epoch 9/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 135ms/step - c_acc: 0.6726 - c_loss: 1.7938 - p_acc: 0.4885 - p_loss: 1.4136 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.4933 - val_p_loss: 1.4163

Epoch 10/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 139ms/step - c_acc: 0.6931 - c_loss: 1.7210 - p_acc: 0.4954 - p_loss: 1.3663 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5140 - val_p_loss: 1.3677

Epoch 11/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 137ms/step - c_acc: 0.7055 - c_loss: 1.6619 - p_acc: 0.5210 - p_loss: 1.3376 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5155 - val_p_loss: 1.3573

Epoch 12/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 30s 145ms/step - c_acc: 0.7215 - c_loss: 1.6112 - p_acc: 0.5264 - p_loss: 1.2920 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5232 - val_p_loss: 1.3337

Epoch 13/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 31s 146ms/step - c_acc: 0.7279 - c_loss: 1.5749 - p_acc: 0.5388 - p_loss: 1.2570 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5217 - val_p_loss: 1.3155

Epoch 14/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 140ms/step - c_acc: 0.7435 - c_loss: 1.5196 - p_acc: 0.5505 - p_loss: 1.2507 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5460 - val_p_loss: 1.2640

Epoch 15/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 40s 135ms/step - c_acc: 0.7477 - c_loss: 1.4979 - p_acc: 0.5653 - p_loss: 1.2188 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5594 - val_p_loss: 1.2351

Epoch 16/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 139ms/step - c_acc: 0.7598 - c_loss: 1.4463 - p_acc: 0.5590 - p_loss: 1.1917 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5551 - val_p_loss: 1.2411

Epoch 17/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 135ms/step - c_acc: 0.7633 - c_loss: 1.4271 - p_acc: 0.5775 - p_loss: 1.1731 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5502 - val_p_loss: 1.2428

Epoch 18/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 140ms/step - c_acc: 0.7666 - c_loss: 1.4246 - p_acc: 0.5752 - p_loss: 1.1805 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5633 - val_p_loss: 1.2167

Epoch 19/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 28s 135ms/step - c_acc: 0.7708 - c_loss: 1.3928 - p_acc: 0.5814 - p_loss: 1.1677 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5665 - val_p_loss: 1.2191

Epoch 20/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 29s 140ms/step - c_acc: 0.7806 - c_loss: 1.3733 - p_acc: 0.5836 - p_loss: 1.1442 - val_c_acc: 0.0000e+00 - val_c_loss: 0.0000e+00 - val_p_acc: 0.5640 - val_p_loss: 1.2172

Maximal validation accuracy: 56.65%

Supervised finetuning of the pretrained encoder

We then finetune the encoder on the labeled examples by attaching a single randomly initialized fully connected classification layer on top of it.

# Supervised finetuning of the pretrained encoder
finetuning_model = keras.Sequential(
    [
        get_augmenter(**classification_augmentation),
        pretraining_model.encoder,
        layers.Dense(10),
    ],
    name="finetuning_model",
)
finetuning_model.compile(
    optimizer=keras.optimizers.Adam(),
    loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    metrics=[keras.metrics.SparseCategoricalAccuracy(name="acc")],
)

finetuning_history = finetuning_model.fit(
    labeled_train_dataset, epochs=num_epochs, validation_data=test_dataset
)
print(
    "Maximal validation accuracy: {:.2f}%".format(
        max(finetuning_history.history["val_acc"]) * 100
    )
)

Epoch 1/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 5s 18ms/step - acc: 0.2104 - loss: 2.0930 - val_acc: 0.4017 - val_loss: 1.5433

Epoch 2/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.4037 - loss: 1.5791 - val_acc: 0.4544 - val_loss: 1.4250

Epoch 3/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.4639 - loss: 1.4161 - val_acc: 0.5266 - val_loss: 1.2958

Epoch 4/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5438 - loss: 1.2686 - val_acc: 0.5655 - val_loss: 1.1711

Epoch 5/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.5678 - loss: 1.1746 - val_acc: 0.5775 - val_loss: 1.1670

Epoch 6/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6096 - loss: 1.1071 - val_acc: 0.6034 - val_loss: 1.1400

Epoch 7/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6242 - loss: 1.0413 - val_acc: 0.6235 - val_loss: 1.0756

Epoch 8/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6284 - loss: 1.0264 - val_acc: 0.6030 - val_loss: 1.1048

Epoch 9/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6491 - loss: 0.9706 - val_acc: 0.5770 - val_loss: 1.2818

Epoch 10/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.6754 - loss: 0.9104 - val_acc: 0.6119 - val_loss: 1.1087

Epoch 11/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 20ms/step - acc: 0.6620 - loss: 0.8855 - val_acc: 0.6323 - val_loss: 1.0526

Epoch 12/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 19ms/step - acc: 0.7060 - loss: 0.8179 - val_acc: 0.6406 - val_loss: 1.0565

Epoch 13/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 3s 17ms/step - acc: 0.7252 - loss: 0.7796 - val_acc: 0.6135 - val_loss: 1.1273

Epoch 14/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7176 - loss: 0.7935 - val_acc: 0.6292 - val_loss: 1.1028

Epoch 15/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7322 - loss: 0.7471 - val_acc: 0.6266 - val_loss: 1.1313

Epoch 16/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7400 - loss: 0.7218 - val_acc: 0.6332 - val_loss: 1.1064

Epoch 17/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7490 - loss: 0.6968 - val_acc: 0.6532 - val_loss: 1.0112

Epoch 18/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7491 - loss: 0.6879 - val_acc: 0.6403 - val_loss: 1.1083

Epoch 19/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 4s 17ms/step - acc: 0.7802 - loss: 0.6504 - val_acc: 0.6479 - val_loss: 1.0548

Epoch 20/20

200/200 ━━━━━━━━━━━━━━━━━━━━ 3s 17ms/step - acc: 0.7800 - loss: 0.6234 - val_acc: 0.6409 - val_loss: 1.0998

Maximal validation accuracy: 65.32%

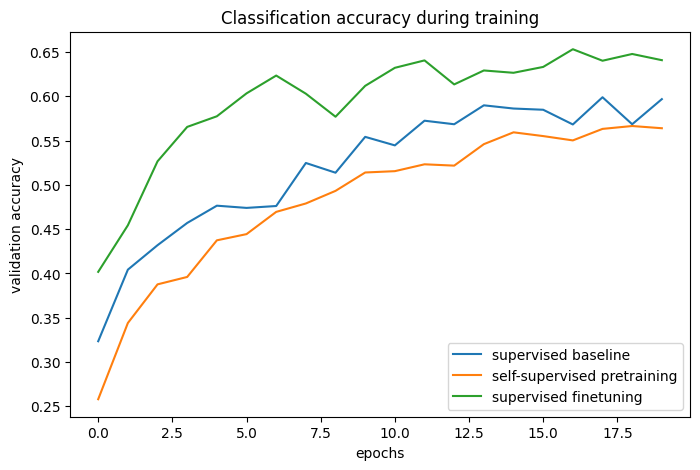

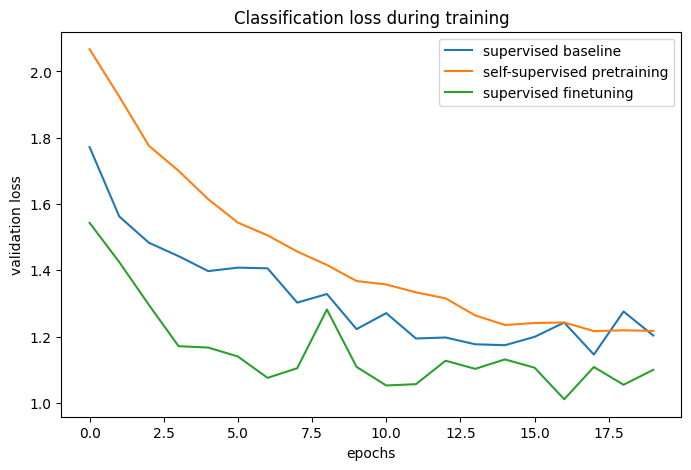

Comparison against the baseline

# The classification accuracies of the baseline and the pretraining + finetuning process:
def plot_training_curves(pretraining_history, finetuning_history, baseline_history):
    for metric_key, metric_name in zip(["acc", "loss"], ["accuracy", "loss"]):
        plt.figure(figsize=(8, 5), dpi=100)
        plt.plot(
            baseline_history.history[f"val_{metric_key}"],
            label="supervised baseline",
        )
        plt.plot(
            pretraining_history.history[f"val_p_{metric_key}"],
            label="self-supervised pretraining",
        )
        plt.plot(
            finetuning_history.history[f"val_{metric_key}"],
            label="supervised finetuning",
        )
        plt.legend()
        plt.title(f"Classification {metric_name} during training")
        plt.xlabel("epochs")
        plt.ylabel(f"validation {metric_name}")


plot_training_curves(pretraining_history, finetuning_history, baseline_history)

By comparing the training curves, we can see that contrastive pretraining reaches a higher validation accuracy, paired with a lower validation loss. This means that the pretrained network was able to generalize better when seeing only a small number of labeled examples.

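Improving further

Architecture

The experiments in the original paper demonstrated that increasing the width and depth of the models improves performance at a higher rate than for supervised learning. Also, using a ResNet-50 encoder is quite standard in the literature. However, keep in mind that more powerful models not only increase training time but also require more memory and limit the maximal batch size you can use.

It has been reported that BatchNorm layers can sometimes degrade performance, as they introduce an intra-batch dependency between samples, which is why they are not used in this example. In my experiments, however, using BatchNorm, especially in the projection head, improves performance.

Hyperparameters

The hyperparameters used in this example have been tuned manually for this task and architecture. Therefore, without changing them, only marginal gains can be expected from further hyperparameter tuning.

However, for a different task or model architecture these would need tuning, so here are my notes on the most important ones:

- Batch size: since the objective can be interpreted (loosely speaking) as a classification over a batch of images, the batch size is actually a more important hyperparameter than usual. The higher, the better.

- Temperature: the temperature defines the "softness" of the softmax distribution used in the cross-entropy loss, and is an important hyperparameter. Lower values generally lead to a higher contrastive accuracy. A recent trick (in ALIGN) is to learn the temperature's value as well (which can be done by defining it as a tf.Variable and applying gradients to it). Even though this provides a good baseline value, in my experiments the learned temperature was somewhat lower than optimal, as it is optimized with respect to the contrastive loss, which is not a perfect proxy for representation quality.

- Image augmentation strength: during pretraining, stronger augmentations increase the difficulty of the task, but after a certain point augmentations that are too strong degrade performance. During finetuning, stronger augmentations reduce overfitting, while in my experience augmentations that are too strong decrease the performance gains from pretraining. The whole data augmentation pipeline can be seen as an important hyperparameter of the algorithm. Implementations of other custom image augmentation layers in Keras can be found in this repository.

- Learning rate schedule: a constant schedule is used here, but it is quite common in the literature to use a cosine decay schedule, which can further improve performance.

- Optimizer: Adam is used in this example, as it provides good performance with default parameters. SGD with momentum requires more tuning, but it could slightly increase performance.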
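Two of the notes above, the effect of the temperature and the cosine decay schedule, can be illustrated with a few lines of plain Python. This is a sketch rather than the example's own code. The helper names `softmax` and `cosine_decay` and the toy similarity values are assumptions made for illustration. Keras also ships a `keras.optimizers.schedules.CosineDecay` schedule that can be passed to an optimizer as its learning rate.

```python
import math


def softmax(logits, temperature):
    # Temperature-scaled softmax: lower temperatures sharpen the distribution.
    scaled = [value / temperature for value in logits]
    max_value = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - max_value) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def cosine_decay(step, total_steps, initial_learning_rate=1e-3):
    # Learning rate that decays from its initial value to zero along a half cosine.
    progress = min(step, total_steps) / total_steps
    return initial_learning_rate * 0.5 * (1.0 + math.cos(math.pi * progress))


# Made-up cosine similarities of one view to three candidate views.
cosine_similarities = [0.9, 0.5, 0.1]
for temperature in (1.0, 0.1):
    probabilities = softmax(cosine_similarities, temperature)
    print(temperature, [round(p, 3) for p in probabilities])

total_steps = 20 * 200  # num_epochs * steps per epoch in this example
print([round(cosine_decay(step, total_steps), 6) for step in (0, 2000, 4000)])
```

At the example's temperature of 0.1, almost all probability mass goes to the most similar candidate, which is what makes the contrastive "classification" sharp.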

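Related works

Other instance-level (image-level) contrastive learning methods:

- MoCo (v2, v3): uses a momentum-encoder as well, whose weights are an exponential moving average of the target encoder.

- SwAV: uses clustering instead of pairwise comparison.

- BarlowTwins: uses a cross correlation-based objective instead of pairwise comparison.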
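The exponential moving average mentioned for MoCo can be sketched in a few lines. This is a generic illustration with plain Python lists, not MoCo's actual implementation. The function name `ema_update` and the momentum value of 0.99 are assumptions.

```python
def ema_update(momentum_weights, encoder_weights, momentum=0.99):
    # Each momentum-encoder weight moves a small step towards the
    # corresponding weight of the encoder that is trained by gradients.
    return [
        momentum * m + (1.0 - momentum) * w
        for m, w in zip(momentum_weights, encoder_weights)
    ]


encoder_weights = [1.0, -2.0, 0.5]  # toy stand-ins for the trained weights
momentum_weights = [0.0, 0.0, 0.0]
for _ in range(3):
    momentum_weights = ema_update(momentum_weights, encoder_weights)
print(momentum_weights)
```

With a momentum close to 1 the averaged weights change slowly, which is the point of the technique: the momentum encoder provides a more stable set of representations than the rapidly changing trained encoder.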

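Keras implementations of **MoCo** and **BarlowTwins** can be found in this repository, which includes a Colab notebook.

There is also a newer line of work that optimizes a similar objective without using any negatives (SimSiam, described in the introduction, is one such method).

In my experience, these methods are more brittle (they can collapse to a constant representation, and I could not get them to work with this encoder architecture). Even though they are generally more dependent on the model architecture, they can improve performance at smaller batch sizes.

You can use the trained model hosted on Hugging Face Hub and try the demo on Hugging Face Spaces.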