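3D image classification from CT scans

Author: Hasib Zunair

Date created: 2020/09/23

Last modified: 2024/01/11

Description: Train a 3D convolutional neural network to predict the presence of pneumonia.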

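Introduction

This example shows the steps needed to build a 3D convolutional neural network (CNN) that predicts the presence of viral pneumonia in computed tomography (CT) scans. 2D CNNs are commonly used to process RGB images (3 channels). A 3D CNN is simply the 3D equivalent. It takes as input a 3D volume or a sequence of 2D frames (e.g. the slices of a CT scan). 3D CNNs are a powerful model for learning representations of volumetric data.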
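The sketch below makes the "third dimension" concrete. It is a naive NumPy implementation of a single-channel 3D convolution with "valid" padding, stride 1 and no bias. Strictly speaking it computes cross-correlation, which is also what deep learning convolution layers compute. A 3×3×3 kernel slides along width, height and depth, so each output voxel mixes information from neighbouring slices. The function name `conv3d_valid` is hypothetical and for illustration only; this is not how Keras implements `Conv3D`.

```python
import numpy as np


def conv3d_valid(volume, kernel):
    """Naive single-channel 3D cross-correlation: 'valid' padding, stride 1, no bias."""
    kw, kh, kd = kernel.shape
    out_shape = tuple(v - k + 1 for v, k in zip(volume.shape, kernel.shape))
    out = np.zeros(out_shape, dtype=np.float64)
    for x in range(out_shape[0]):
        for y in range(out_shape[1]):
            for z in range(out_shape[2]):
                # The window spans neighbouring slices along depth as well as width/height.
                patch = volume[x:x + kw, y:y + kh, z:z + kd]
                out[x, y, z] = np.sum(patch * kernel)
    return out


# A toy (width, height, depth) volume and a 3x3x3 averaging kernel.
volume = np.arange(6 * 6 * 5, dtype=np.float64).reshape(6, 6, 5)
kernel = np.full((3, 3, 3), 1.0 / 27.0)
features = conv3d_valid(volume, kernel)
print(features.shape)  # (4, 4, 3)
```

Each axis shrinks by `kernel_size - 1`. The same rule explains the first convolution in the model summary further down, where 128 becomes 126 and 64 becomes 62.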

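References

- A survey on Deep Learning Advances on Different 3D Data Representations

- VoxNet: A 3D Convolutional Neural Network for Real-Time Object Recognition

- FusionNet: 3D Object Classification Using Multiple Data Representations

- Uniformizing Techniques to Process CT scans with 3D CNNs for Tuberculosis Prediction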

Setup

import os
import zipfile
import numpy as np
import tensorflow as tf  # for data preprocessing

import keras
from keras import layers

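Downloading the MosMedData: Chest CT Scans with COVID-19 Related Findings

In this example, we use a subset of the MosMedData: Chest CT Scans with COVID-19 Related Findings. This dataset consists of lung CT scans with COVID-19 related findings, as well as scans without such findings.

We will use the radiological findings associated with the CT scans as labels to build a classifier that predicts the presence of viral pneumonia. Hence, the task is a binary classification problem.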

# Download url of normal CT scans.
url = "https://github.com/hasibzunair/3D-image-classification-tutorial/releases/download/v0.2/CT-0.zip"
filename = os.path.join(os.getcwd(), "CT-0.zip")
keras.utils.get_file(filename, url)

# Download url of abnormal CT scans.
url = "https://github.com/hasibzunair/3D-image-classification-tutorial/releases/download/v0.2/CT-23.zip"
filename = os.path.join(os.getcwd(), "CT-23.zip")
keras.utils.get_file(filename, url)

# Make a directory to store the data (no error if it already exists).
os.makedirs("MosMedData", exist_ok=True)

# Unzip data in the newly created directory.
with zipfile.ZipFile("CT-0.zip", "r") as z_fp:
    z_fp.extractall("./MosMedData/")

with zipfile.ZipFile("CT-23.zip", "r") as z_fp:
    z_fp.extractall("./MosMedData/")

Downloading data from https://github.com/hasibzunair/3D-image-classification-tutorial/releases/download/v0.2/CT-0.zip

1045162547/1045162547 ━━━━━━━━━━━━━━━━━━━━ 4s 0us/step

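Loading data and preprocessing

The files are provided in Nifti format with the extension .nii. To read the scans, we use the nibabel package, which you can install via pip install nibabel. CT scans store raw voxel intensity in Hounsfield units (HU). In this dataset the values range from -1024 to above 2000. Values above 400 correspond to bones with different radiointensity, so 400 is used as the upper bound. A window between -1000 and 400 is commonly used to normalize CT scans.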
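A small worked example of the HU windowing described above may help. This is a minimal sketch that does the same thing as the `normalize` helper below: it clips to [-1000, 400] and maps that range linearly onto [0, 1]. It uses `np.clip` instead of in-place masking, and the name `normalize_hu` is hypothetical.

```python
import numpy as np

HU_MIN, HU_MAX = -1000.0, 400.0  # window used in this example


def normalize_hu(volume):
    """Clip to [HU_MIN, HU_MAX], then map linearly to [0, 1] as float32."""
    clipped = np.clip(volume, HU_MIN, HU_MAX)
    return ((clipped - HU_MIN) / (HU_MAX - HU_MIN)).astype("float32")


hu_values = np.array([-1024.0, -1000.0, -300.0, 0.0, 400.0, 2000.0])
scaled = normalize_hu(hu_values)
print(scaled)  # approximately [0, 0, 0.5, 0.714, 1, 1]
```

Air (about -1000 HU) maps to 0. Water (0 HU) maps to about 0.71. Everything at or above the 400 HU bone threshold saturates at 1.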

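To process the data, we do the following:

- We scale the HU values to be between 0 and 1.

- We rotate the volumes by 90 degrees, so the orientation is fixed.

- We resize the width, height and depth.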

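Here we define several helper functions to process the data. These functions will be used when building the training and validation datasets.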
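The resizing step in `resize_volume` below computes one zoom factor per axis, `desired / current`. The code writes this as `1 / (current / desired)`. Width and height are the first two axes and depth is the last. The sketch below shows that arithmetic for a hypothetical 512×512×43 scan. It also relies on `scipy.ndimage.zoom` choosing its output shape as the rounded product of input shape and zoom factor. Note that depth can be stretched as well as shrunk.

```python
def zoom_factors(current_shape, desired_shape=(128, 128, 64)):
    """Per-axis zoom factors (desired / current) for a (width, height, depth) volume."""
    return tuple(d / c for d, c in zip(desired_shape, current_shape))


# Hypothetical scan size, for illustration only.
scan_shape = (512, 512, 43)
factors = zoom_factors(scan_shape)

# ndimage.zoom rounds shape * factor to obtain the output shape.
resized_shape = tuple(round(c * f) for c, f in zip(scan_shape, factors))
print(factors)        # (0.25, 0.25, 1.488...)
print(resized_shape)  # (128, 128, 64)
```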

import nibabel as nib
from scipy import ndimage


def read_nifti_file(filepath):
    """Read and load volume"""
    # Read file
    scan = nib.load(filepath)
    # Get raw data
    scan = scan.get_fdata()
    return scan


def normalize(volume):
    """Clip HU values to [-1000, 400] and rescale them to [0, 1]."""
    hu_min = -1000
    hu_max = 400
    volume[volume < hu_min] = hu_min
    volume[volume > hu_max] = hu_max
    volume = (volume - hu_min) / (hu_max - hu_min)
    volume = volume.astype("float32")
    return volume


def resize_volume(img):
    """Rotate the volume and resize it to 128x128x64 (width, height, depth)."""
    # Set the desired output size
    desired_depth = 64
    desired_width = 128
    desired_height = 128
    # Get the current size
    current_depth = img.shape[-1]
    current_width = img.shape[0]
    current_height = img.shape[1]
    # Compute the zoom factor for each axis
    depth = current_depth / desired_depth
    width = current_width / desired_width
    height = current_height / desired_height
    depth_factor = 1 / depth
    width_factor = 1 / width
    height_factor = 1 / height
    # Rotate by 90 degrees to fix the orientation
    img = ndimage.rotate(img, 90, reshape=False)
    # Resize all three axes with linear (order=1) interpolation
    img = ndimage.zoom(img, (width_factor, height_factor, depth_factor), order=1)
    return img


def process_scan(path):
    """Read, normalize and resize a volume"""
    # Read scan
    volume = read_nifti_file(path)
    # Normalize
    volume = normalize(volume)
    # Resize width, height and depth
    volume = resize_volume(volume)
    return volume

Let's read the paths of the CT scans from the class directories.
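One caveat: `os.listdir` returns entries in arbitrary, filesystem-dependent order. The split further down takes the first 70 scans of each class for training, so which scans end up in validation can differ between machines. A minimal sketch of a hypothetical helper, `list_scan_paths`, sorts the listing to make that split reproducible. The file names in the demo are made up.

```python
import os
import tempfile


def list_scan_paths(folder):
    """Return sorted absolute paths of the regular files in `folder`."""
    folder = os.path.abspath(folder)
    return sorted(
        os.path.join(folder, name)
        for name in os.listdir(folder)
        if os.path.isfile(os.path.join(folder, name))
    )


with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical file names, created in reverse order on purpose.
    for name in ["study_0002.nii.gz", "study_0001.nii.gz"]:
        open(os.path.join(tmp, name), "w").close()
    names = [os.path.basename(p) for p in list_scan_paths(tmp)]

print(names)  # ['study_0001.nii.gz', 'study_0002.nii.gz']
```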

# Folder "CT-0" consists of CT scans having normal lung tissue,
# no CT-signs of viral pneumonia.
normal_scan_paths = [
    os.path.join(os.getcwd(), "MosMedData/CT-0", x)
    for x in os.listdir("MosMedData/CT-0")
]
# Folder "CT-23" consists of CT scans having several ground-glass opacifications,
# involvement of lung parenchyma.
abnormal_scan_paths = [
    os.path.join(os.getcwd(), "MosMedData/CT-23", x)
    for x in os.listdir("MosMedData/CT-23")
]

print("CT scans with normal lung tissue: " + str(len(normal_scan_paths)))
print("CT scans with abnormal lung tissue: " + str(len(abnormal_scan_paths)))

CT scans with normal lung tissue: 100

CT scans with abnormal lung tissue: 100

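Build train and validation datasets

Read the scans from the class directories and assign labels. Resize the scans to a shape of 128x128x64. Rescale the raw HU values to the range 0 to 1. Lastly, split the dataset into train and validation subsets.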
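Two quick checks on this step are sketched below using only NumPy. The first is memory. Every preprocessed scan is a float32 array of 128×128×64 voxels, so holding all 200 scans in memory takes about 800 MiB. `np.concatenate` then copies them again into `x_train` and `x_val`. The second is balance. Because the 70/30 split is taken per class, both subsets keep a 50/50 label ratio. The label arrays here are stand-ins built the same way as in the code below.

```python
import numpy as np

# Memory footprint of the preprocessed scans.
voxels_per_scan = 128 * 128 * 64
bytes_per_scan = voxels_per_scan * np.dtype("float32").itemsize
total_mib = 200 * bytes_per_scan / 2**20
print(bytes_per_scan / 2**20, total_mib)  # 4.0 800.0

# Per-class 70/30 split keeps both subsets balanced.
abnormal_labels = np.ones(100, dtype="int64")
normal_labels = np.zeros(100, dtype="int64")
y_train = np.concatenate((abnormal_labels[:70], normal_labels[:70]))
y_val = np.concatenate((abnormal_labels[70:], normal_labels[70:]))
print(len(y_train), y_train.mean(), len(y_val), y_val.mean())  # 140 0.5 60 0.5
```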

# Read and process the scans.
# Each scan is resized across height, width, and depth and rescaled.
abnormal_scans = np.array([process_scan(path) for path in abnormal_scan_paths])
normal_scans = np.array([process_scan(path) for path in normal_scan_paths])

# For the CT scans having presence of viral pneumonia
# assign 1, for the normal ones assign 0.
abnormal_labels = np.array([1 for _ in range(len(abnormal_scans))])
normal_labels = np.array([0 for _ in range(len(normal_scans))])

# Split data in the ratio 70-30 for training and validation.
x_train = np.concatenate((abnormal_scans[:70], normal_scans[:70]), axis=0)
y_train = np.concatenate((abnormal_labels[:70], normal_labels[:70]), axis=0)
x_val = np.concatenate((abnormal_scans[70:], normal_scans[70:]), axis=0)
y_val = np.concatenate((abnormal_labels[70:], normal_labels[70:]), axis=0)
print(
    "Number of samples in train and validation are %d and %d."
    % (x_train.shape[0], x_val.shape[0])
)

Number of samples in train and validation are 140 and 60.

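Data augmentation

The CT scans are also augmented by rotating them at random angles during training. Each scan is stored as a rank-3 tensor, so the full dataset has shape (samples, height, width, depth). To be able to perform 3D convolutions on the data, we add a dimension of size 1 at axis 4. This is axis 3 when operating on a single scan, as the preprocessing functions below do. The new shape is thus (samples, height, width, depth, 1). There are many different preprocessing and augmentation techniques, and this example shows a few simple ones to get started.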
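The axis bookkeeping can be checked with NumPy, whose `expand_dims` follows the same axis convention as `tf.expand_dims`. The sketch uses a small stand-in batch rather than real 128×128×64 scans. Adding the channel at axis 4 of the whole batch gives the same per-scan arrays as adding it at axis 3 of each scan, which is what `train_preprocessing` does.

```python
import numpy as np

# A small stand-in batch shaped (samples, height, width, depth).
batch = np.random.rand(3, 8, 8, 4).astype("float32")

# Whole batch: the new channel axis is axis 4.
batch_with_channel = np.expand_dims(batch, axis=4)
# Single scan (as inside the tf.data pipeline): the same axis is axis 3.
scan_with_channel = np.expand_dims(batch[0], axis=3)

print(batch_with_channel.shape)  # (3, 8, 8, 4, 1)
print(scan_with_channel.shape)   # (8, 8, 4, 1)
```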

import random

from scipy import ndimage


def rotate(volume):
    """Rotate the volume by a few degrees"""

    def scipy_rotate(volume):
        # define some rotation angles
        angles = [-20, -10, -5, 5, 10, 20]
        # pick an angle at random
        angle = random.choice(angles)
        # rotate volume
        volume = ndimage.rotate(volume, angle, reshape=False)
        # ndimage.rotate uses cubic-spline interpolation by default (order=3),
        # which can overshoot slightly, so clip the values back into [0, 1].
        volume[volume < 0] = 0
        volume[volume > 1] = 1
        return volume

    augmented_volume = tf.numpy_function(scipy_rotate, [volume], tf.float32)
    return augmented_volume


def train_preprocessing(volume, label):
    """Process training data by rotating and adding a channel."""
    # Rotate volume
    volume = rotate(volume)
    volume = tf.expand_dims(volume, axis=3)
    return volume, label


def validation_preprocessing(volume, label):
    """Process validation data by only adding a channel."""
    volume = tf.expand_dims(volume, axis=3)
    return volume, label

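When defining the training and validation data loaders, the training data is passed through an augmentation function that randomly rotates the volume at different angles. Note that both the training and validation data are already rescaled to have values between 0 and 1.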
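With `batch_size = 2`, the number of steps per epoch follows directly from the subset sizes. `Dataset.batch` keeps a final partial batch by default (`drop_remainder=False`), so the count is a ceiling division. This plain-Python arithmetic explains the "70/70" progress counter in the training log further down. `shuffle(len(x_train))` uses a buffer as large as the dataset, so each epoch sees a full shuffle.

```python
import math

n_train, n_val = 140, 60  # subset sizes printed above
batch_size = 2

# Partial final batches are kept, hence the ceiling.
train_steps = math.ceil(n_train / batch_size)
val_steps = math.ceil(n_val / batch_size)
print(train_steps, val_steps)  # 70 30
```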

# Define data loaders.
train_loader = tf.data.Dataset.from_tensor_slices((x_train, y_train))
validation_loader = tf.data.Dataset.from_tensor_slices((x_val, y_val))

batch_size = 2
# Augment the data on the fly during training.
train_dataset = (
    train_loader.shuffle(len(x_train))
    .map(train_preprocessing)
    .batch(batch_size)
    .prefetch(2)
)
# Only add the channel axis; the validation data is already rescaled.
validation_dataset = (
    validation_loader.shuffle(len(x_val))
    .map(validation_preprocessing)
    .batch(batch_size)
    .prefetch(2)
)

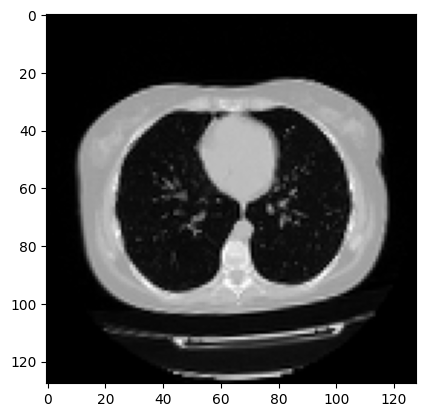

Visualize an augmented CT scan.

import matplotlib.pyplot as plt

data = train_dataset.take(1)
images, labels = list(data)[0]
images = images.numpy()
image = images[0]
print("Dimension of the CT scan is:", image.shape)
plt.imshow(np.squeeze(image[:, :, 30]), cmap="gray")

Dimension of the CT scan is: (128, 128, 64, 1)

<matplotlib.image.AxesImage at 0x7fc5b9900d50>

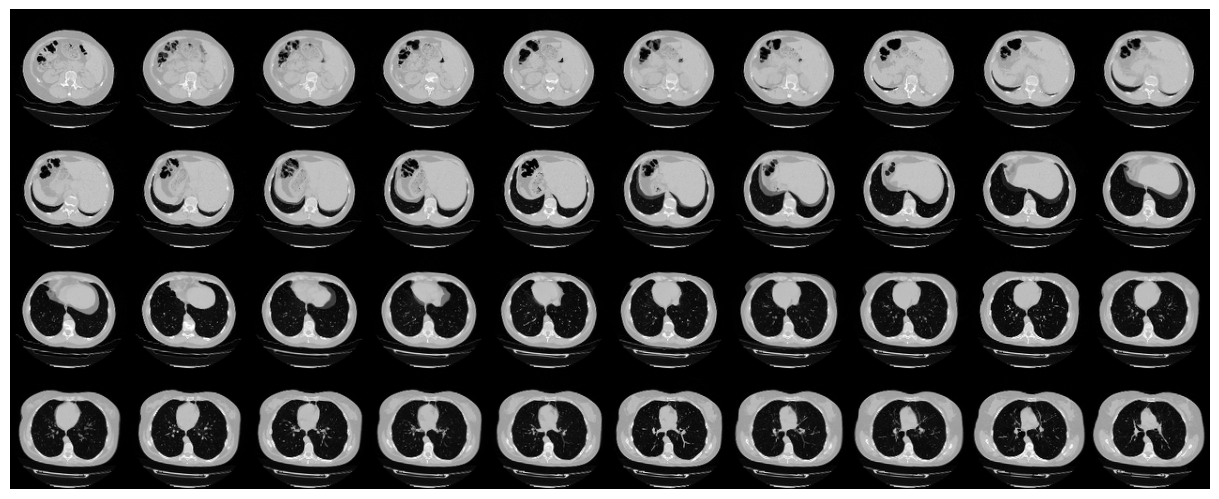

Since a CT scan has many slices, let's visualize a montage of the slices.
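The core of a montage is rearranging a (width, height, depth) volume into a grid of rows × columns slices. Here is a minimal NumPy sketch of that step, with the hypothetical name `slices_to_grid`. Slice k lands at row `k // num_columns`, column `k % num_columns`. Unlike `plot_slices` below, it leaves the in-plane orientation untouched; `plot_slices` additionally applies `np.rot90` and a transpose.

```python
import numpy as np


def slices_to_grid(volume, num_rows, num_columns):
    """Arrange the first num_rows * num_columns depth slices into a grid."""
    n = num_rows * num_columns
    slices = np.moveaxis(volume[:, :, :n], -1, 0)  # (n, width, height)
    return slices.reshape(num_rows, num_columns, *slices.shape[1:])


volume = np.random.rand(16, 16, 64)
grid = slices_to_grid(volume, 4, 10)  # 4 x 10 = 40 slices
print(grid.shape)  # (4, 10, 16, 16)
```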

def plot_slices(num_rows, num_columns, width, height, data):
    """Plot a montage of num_rows * num_columns CT slices"""
    data = np.rot90(np.array(data))
    data = np.transpose(data)
    data = np.reshape(data, (num_rows, num_columns, width, height))
    rows_data, columns_data = data.shape[0], data.shape[1]
    heights = [slc[0].shape[0] for slc in data]
    widths = [slc.shape[1] for slc in data[0]]
    fig_width = 12.0
    fig_height = fig_width * sum(heights) / sum(widths)
    f, axarr = plt.subplots(
        rows_data,
        columns_data,
        figsize=(fig_width, fig_height),
        gridspec_kw={"height_ratios": heights},
    )
    for i in range(rows_data):
        for j in range(columns_data):
            axarr[i, j].imshow(data[i][j], cmap="gray")
            axarr[i, j].axis("off")
    plt.subplots_adjust(wspace=0, hspace=0, left=0, right=1, bottom=0, top=1)
    plt.show()


# Visualize montage of slices.
# 4 rows and 10 columns for 40 slices of the CT scan.
plot_slices(4, 10, 128, 128, image[:, :, :40])

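Define a 3D convolutional neural network

To make the model easier to understand, we structure it into blocks. The architecture of the 3D CNN used in this example is based on this paper.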
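The block structure can be checked by hand before building the model. This plain-Python sketch relies on the Keras defaults used below: `Conv3D` has `padding="valid"` and stride 1, and `MaxPool3D` strides by `pool_size`. It also uses the fact that each `BatchNormalization` layer holds 4 parameters per channel (gamma, beta, moving mean, moving variance), two of which are not trained. It reproduces the output shapes and parameter counts that `model.summary()` prints.

```python
def block_output_shape(shape, kernel_size=3, pool_size=2):
    """Spatial shape after one Conv3D ('valid', stride 1) + MaxPool3D block."""
    return tuple((s - kernel_size + 1) // pool_size for s in shape)


shape, channels, total, non_trainable = (128, 128, 64), 1, 0, 0
shapes = []
for filters in [64, 64, 128, 256]:
    shape = block_output_shape(shape)
    shapes.append(shape)
    total += 27 * channels * filters + filters  # Conv3D: 3*3*3*C_in weights per filter + bias
    total += 4 * filters                         # BatchNormalization parameters
    non_trainable += 2 * filters                 # moving mean and moving variance
    channels = filters

total += channels * 512 + 512  # Dense(512) after global average pooling
total += 512 * 1 + 1           # Dense(1) sigmoid output
print(shapes)  # [(63, 63, 31), (30, 30, 14), (14, 14, 6), (6, 6, 2)]
print(total, non_trainable)  # 1352897 1024
```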

def get_model(width=128, height=128, depth=64):
    """Build a 3D convolutional neural network model."""

    inputs = keras.Input((width, height, depth, 1))

    x = layers.Conv3D(filters=64, kernel_size=3, activation="relu")(inputs)
    x = layers.MaxPool3D(pool_size=2)(x)
    x = layers.BatchNormalization()(x)

    x = layers.Conv3D(filters=64, kernel_size=3, activation="relu")(x)
    x = layers.MaxPool3D(pool_size=2)(x)
    x = layers.BatchNormalization()(x)

    x = layers.Conv3D(filters=128, kernel_size=3, activation="relu")(x)
    x = layers.MaxPool3D(pool_size=2)(x)
    x = layers.BatchNormalization()(x)

    x = layers.Conv3D(filters=256, kernel_size=3, activation="relu")(x)
    x = layers.MaxPool3D(pool_size=2)(x)
    x = layers.BatchNormalization()(x)

    x = layers.GlobalAveragePooling3D()(x)
    x = layers.Dense(units=512, activation="relu")(x)
    x = layers.Dropout(0.3)(x)

    outputs = layers.Dense(units=1, activation="sigmoid")(x)

    # Define the model.
    model = keras.Model(inputs, outputs, name="3dcnn")
    return model


# Build model.
model = get_model(width=128, height=128, depth=64)
model.summary()

Model: "3dcnn"

Layer (type)                                       Output Shape               Param #
input_layer (InputLayer)                           (None, 128, 128, 64, 1)    0
conv3d (Conv3D)                                    (None, 126, 126, 62, 64)   1,792
max_pooling3d (MaxPooling3D)                       (None, 63, 63, 31, 64)     0
batch_normalization (BatchNormalization)           (None, 63, 63, 31, 64)     256
conv3d_1 (Conv3D)                                  (None, 61, 61, 29, 64)     110,656
max_pooling3d_1 (MaxPooling3D)                     (None, 30, 30, 14, 64)     0
batch_normalization_1 (BatchNormalization)         (None, 30, 30, 14, 64)     256
conv3d_2 (Conv3D)                                  (None, 28, 28, 12, 128)    221,312
max_pooling3d_2 (MaxPooling3D)                     (None, 14, 14, 6, 128)     0
batch_normalization_2 (BatchNormalization)         (None, 14, 14, 6, 128)     512
conv3d_3 (Conv3D)                                  (None, 12, 12, 4, 256)     884,992
max_pooling3d_3 (MaxPooling3D)                     (None, 6, 6, 2, 256)       0
batch_normalization_3 (BatchNormalization)         (None, 6, 6, 2, 256)       1,024
global_average_pooling3d (GlobalAveragePooling3D)  (None, 256)                0
dense (Dense)                                      (None, 512)                131,584
dropout (Dropout)                                  (None, 512)                0
dense_1 (Dense)                                    (None, 1)                  513

Total params: 1,352,897 (5.16 MB)

Trainable params: 1,351,873 (5.16 MB)

Non-trainable params: 1,024 (4.00 KB)

Train model
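The compile step below uses `ExponentialDecay` with `staircase=True`. According to the Keras documentation, this evaluates `initial_learning_rate * decay_rate ** (step // decay_steps)`. The plain-Python sketch below evaluates that formula; the function name is hypothetical. Each epoch is 70 steps, so even 100 full epochs is only 7,000 steps, well below `decay_steps=100000`. The learning rate therefore effectively stays at 1e-4 for this entire run.

```python
import math


def staircase_exponential_decay(step, initial_lr=1e-4, decay_steps=100_000, decay_rate=0.96):
    """Learning rate after `step` optimizer steps with a staircase exponential schedule."""
    return initial_lr * decay_rate ** math.floor(step / decay_steps)


max_steps = 70 * 100  # steps per epoch * maximum number of epochs
lr_start = staircase_exponential_decay(0)
lr_end = staircase_exponential_decay(max_steps)
lr_first_decay = staircase_exponential_decay(100_000)
print(lr_start, lr_end, lr_first_decay)  # 0.0001 0.0001 ~9.6e-05
```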

# Compile model.
initial_learning_rate = 0.0001
lr_schedule = keras.optimizers.schedules.ExponentialDecay(
    initial_learning_rate, decay_steps=100000, decay_rate=0.96, staircase=True
)
model.compile(
    loss="binary_crossentropy",
    optimizer=keras.optimizers.Adam(learning_rate=lr_schedule),
    metrics=["acc"],
    run_eagerly=True,
)

# Define callbacks.
# ModelCheckpoint monitors "val_loss" by default, so with save_best_only=True
# the saved model is the one with the lowest validation loss.
checkpoint_cb = keras.callbacks.ModelCheckpoint(
    "3d_image_classification.keras", save_best_only=True
)
early_stopping_cb = keras.callbacks.EarlyStopping(monitor="val_acc", patience=15)

# Train the model, doing validation at the end of each epoch.
epochs = 100
model.fit(
    train_dataset,
    validation_data=validation_dataset,
    epochs=epochs,
    shuffle=True,  # ignored for tf.data datasets; train_dataset is shuffled itself
    verbose=2,
    callbacks=[checkpoint_cb, early_stopping_cb],
)

Epoch 1/100

70/70 - 40s - 568ms/step - acc: 0.5786 - loss: 0.7128 - val_acc: 0.5000 - val_loss: 0.8744

Epoch 2/100

70/70 - 26s - 370ms/step - acc: 0.6000 - loss: 0.6760 - val_acc: 0.5000 - val_loss: 1.2741

Epoch 3/100

70/70 - 26s - 373ms/step - acc: 0.5643 - loss: 0.6768 - val_acc: 0.5000 - val_loss: 1.4767

Epoch 4/100

70/70 - 26s - 376ms/step - acc: 0.6643 - loss: 0.6671 - val_acc: 0.5000 - val_loss: 1.2609

Epoch 5/100

70/70 - 26s - 374ms/step - acc: 0.6714 - loss: 0.6274 - val_acc: 0.5667 - val_loss: 0.6470

Epoch 6/100

70/70 - 26s - 372ms/step - acc: 0.5929 - loss: 0.6492 - val_acc: 0.6667 - val_loss: 0.6022

Epoch 7/100

70/70 - 26s - 374ms/step - acc: 0.5929 - loss: 0.6601 - val_acc: 0.5667 - val_loss: 0.6788

Epoch 8/100

70/70 - 26s - 378ms/step - acc: 0.6000 - loss: 0.6559 - val_acc: 0.6667 - val_loss: 0.6090

Epoch 9/100

70/70 - 26s - 373ms/step - acc: 0.6357 - loss: 0.6423 - val_acc: 0.6000 - val_loss: 0.6535

Epoch 10/100

70/70 - 26s - 374ms/step - acc: 0.6500 - loss: 0.6127 - val_acc: 0.6500 - val_loss: 0.6204

Epoch 11/100

70/70 - 26s - 374ms/step - acc: 0.6714 - loss: 0.5994 - val_acc: 0.7000 - val_loss: 0.6218

Epoch 12/100

70/70 - 26s - 374ms/step - acc: 0.6714 - loss: 0.5980 - val_acc: 0.7167 - val_loss: 0.5069

Epoch 13/100

70/70 - 26s - 369ms/step - acc: 0.7214 - loss: 0.6003 - val_acc: 0.7833 - val_loss: 0.5182

Epoch 14/100

70/70 - 26s - 372ms/step - acc: 0.6643 - loss: 0.6076 - val_acc: 0.7167 - val_loss: 0.5613

Epoch 15/100

70/70 - 26s - 373ms/step - acc: 0.6571 - loss: 0.6359 - val_acc: 0.6167 - val_loss: 0.6184

Epoch 16/100

70/70 - 26s - 374ms/step - acc: 0.6429 - loss: 0.6053 - val_acc: 0.7167 - val_loss: 0.5258

Epoch 17/100

70/70 - 26s - 370ms/step - acc: 0.6786 - loss: 0.6119 - val_acc: 0.5667 - val_loss: 0.8481

Epoch 18/100

70/70 - 26s - 372ms/step - acc: 0.6286 - loss: 0.6298 - val_acc: 0.6667 - val_loss: 0.5709

Epoch 19/100

70/70 - 26s - 372ms/step - acc: 0.7214 - loss: 0.5979 - val_acc: 0.5833 - val_loss: 0.6730

Epoch 20/100

70/70 - 26s - 372ms/step - acc: 0.7571 - loss: 0.5224 - val_acc: 0.7167 - val_loss: 0.5710

Epoch 21/100

70/70 - 26s - 372ms/step - acc: 0.7357 - loss: 0.5606 - val_acc: 0.7167 - val_loss: 0.5444

Epoch 22/100

70/70 - 26s - 372ms/step - acc: 0.7357 - loss: 0.5334 - val_acc: 0.5667 - val_loss: 0.7919

Epoch 23/100

70/70 - 26s - 373ms/step - acc: 0.7071 - loss: 0.5337 - val_acc: 0.5167 - val_loss: 0.9527

Epoch 24/100

70/70 - 26s - 371ms/step - acc: 0.7071 - loss: 0.5635 - val_acc: 0.7167 - val_loss: 0.5333

Epoch 25/100

70/70 - 26s - 373ms/step - acc: 0.7643 - loss: 0.4787 - val_acc: 0.6333 - val_loss: 1.0172

Epoch 26/100

70/70 - 26s - 372ms/step - acc: 0.7357 - loss: 0.5535 - val_acc: 0.6500 - val_loss: 0.6926

Epoch 27/100

70/70 - 26s - 370ms/step - acc: 0.7286 - loss: 0.5608 - val_acc: 0.5000 - val_loss: 3.3032

Epoch 28/100

70/70 - 26s - 370ms/step - acc: 0.7429 - loss: 0.5436 - val_acc: 0.6500 - val_loss: 0.6438

<keras.src.callbacks.history.History at 0x7fc5b923e810>
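The log above ends at epoch 28 rather than 100 because of `EarlyStopping(monitor="val_acc", patience=15)`. The sketch below replays the logged `val_acc` values through a simplified patience rule. It is not the Keras source. It assumes the callback treats accuracy as "higher is better" (which `mode="auto"` should infer for an accuracy metric) and that only strict improvements reset the counter (`min_delta=0`). The best `val_acc` came at epoch 13, and 15 epochs without improvement ends training at epoch 28. `ModelCheckpoint` monitors `val_loss` by default instead. By that rule, the checkpoint reloaded later should come from epoch 12 (`val_loss` 0.5069), not epoch 13.

```python
# val_acc values copied from the training log above (epochs 1-28).
val_acc = [
    0.5000, 0.5000, 0.5000, 0.5000, 0.5667, 0.6667, 0.5667, 0.6667, 0.6000, 0.6500,
    0.7000, 0.7167, 0.7833, 0.7167, 0.6167, 0.7167, 0.5667, 0.6667, 0.5833, 0.7167,
    0.7167, 0.5667, 0.5167, 0.7167, 0.6333, 0.6500, 0.5000, 0.6500,
]


def early_stopping_epoch(history, patience):
    """Return the 1-based epoch at which a max-mode patience rule stops, or None."""
    best = float("-inf")
    wait = 0
    for epoch, value in enumerate(history, start=1):
        if value > best:  # strict improvement resets the counter
            best = value
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return None


stop_epoch = early_stopping_epoch(val_acc, patience=15)
best_epoch = val_acc.index(max(val_acc)) + 1
print(best_epoch, stop_epoch)  # 13 28
```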

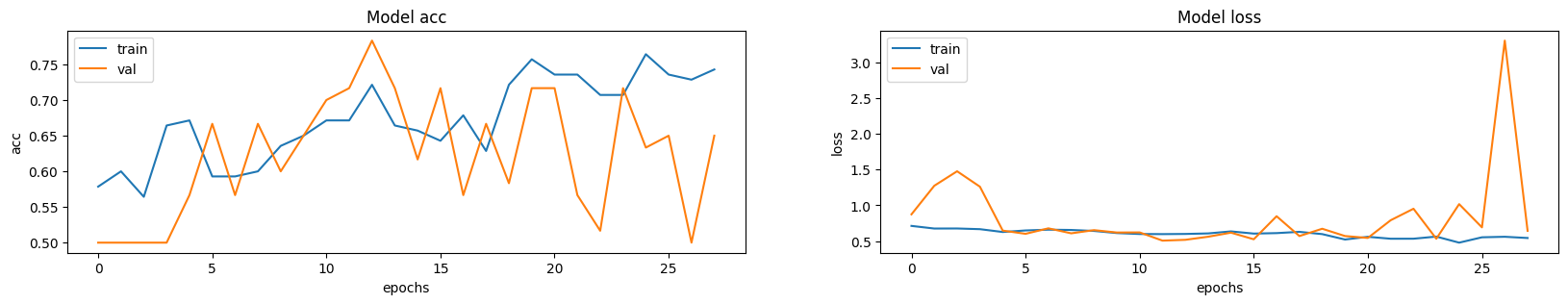

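It is important to note that the number of samples is very small (only 200) and we don't specify a random seed. As such, you can expect significant variance in the results. The full dataset, which consists of over 1000 CT scans, can be found here. Using the full dataset, an accuracy of 83% was achieved. A variability of 6-7% in the classification performance is observed in both cases.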
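The augmentation is one source of the run-to-run variance mentioned above: `scipy_rotate` draws its angle with Python's global `random.choice`. This minimal sketch shows that seeding Python's RNG makes that sequence of angles repeatable. The helper name `sample_angles` and the seed value 1337 are arbitrary choices for illustration. For a whole Keras run, `keras.utils.set_random_seed(seed)` seeds Python, NumPy and the backend framework in one call. That alone may still not make GPU training bit-for-bit deterministic.

```python
import random

ANGLES = [-20, -10, -5, 5, 10, 20]  # same choices as scipy_rotate


def sample_angles(seed, n=8):
    """Seed Python's global RNG, then draw n angles the way scipy_rotate does."""
    random.seed(seed)
    return [random.choice(ANGLES) for _ in range(n)]


first_run = sample_angles(seed=1337)
second_run = sample_angles(seed=1337)
print(first_run == second_run)  # True
```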

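Visualizing model performance

Here the model accuracy and loss for the training and the validation sets are plotted. Since the validation set is class-balanced (30 scans per class), accuracy gives a fair picture of the model's performance here.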
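A short NumPy check shows what class balance means in practice. A model that ignores its input and always outputs the same class scores exactly 50% on this validation set. That is consistent with the `val_acc` of 0.5000 in the first epochs of the log, which suggests, though does not prove, that the model was predicting a single class at that point. Each validation scan is worth about 1.67 percentage points, so the 6-7% variability mentioned above corresponds to roughly four scans.

```python
import numpy as np

# Validation labels as built above: 30 abnormal (1) followed by 30 normal (0).
y_val = np.array([1] * 30 + [0] * 30)

# A degenerate "model" that predicts abnormal for every scan.
constant_predictions = np.ones_like(y_val)
constant_acc = float(np.mean(constant_predictions == y_val))

# How much a single scan moves validation accuracy.
one_scan = 1 / len(y_val)
print(constant_acc, round(100 * one_scan, 2))  # 0.5 1.67
```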

fig, ax = plt.subplots(1, 2, figsize=(20, 3))
ax = ax.ravel()

for i, metric in enumerate(["acc", "loss"]):
    ax[i].plot(model.history.history[metric])
    ax[i].plot(model.history.history["val_" + metric])
    ax[i].set_title("Model {}".format(metric))
    ax[i].set_xlabel("epochs")
    ax[i].set_ylabel(metric)
    ax[i].legend(["train", "val"])

Make predictions on a single CT scan

# Load best weights.
model.load_weights("3d_image_classification.keras")
# Add a batch axis and a channel axis: (128, 128, 64) -> (1, 128, 128, 64, 1).
prediction = model.predict(x_val[0][np.newaxis, ..., np.newaxis])[0]
scores = [1 - prediction[0], prediction[0]]

class_names = ["normal", "abnormal"]
for score, name in zip(scores, class_names):
    print(
        "This model is %.2f percent confident that CT scan is %s"
        % ((100 * score), name)
    )

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 478ms/step


This model is 32.99 percent confident that CT scan is normal

This model is 67.01 percent confident that CT scan is abnormal
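The final `Dense(1)` layer uses a sigmoid. The model therefore outputs a single probability `p` for the "abnormal" class, and the script above reports `[1 - p, p]`. Turning that into a hard label needs a decision threshold. The sketch below assumes 0.5, a common default that this example does not itself specify; the helper name `sigmoid_to_label` is hypothetical. With the probability printed above, it yields "abnormal".

```python
def sigmoid_to_label(p, class_names=("normal", "abnormal"), threshold=0.5):
    """Map a sigmoid output p = P(abnormal) to per-class scores and a hard label."""
    scores = {class_names[0]: 1.0 - p, class_names[1]: p}
    label = class_names[1] if p >= threshold else class_names[0]
    return label, scores


label, scores = sigmoid_to_label(0.6701)
print(label, round(100 * scores["normal"], 2))  # abnormal 32.99
```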